Pushing the limits of visual navigation in ultra-sparse environments


As mentioned in our previous post, a key blocker to autonomy for mobile robots is how narrowly existing navigation stacks are built, which limits generalization across platforms.

Even for well-engineered perception stacks, performance degrades sharply in visually sparse environments. Most current solutions rely heavily on classical approaches and domain-specific assumptions, causing them to fail in real-world settings that lack uniquely identifiable visual features, such as clear landmarks or high-contrast lines.

Two weeks ago, in the Ocotillo desert, we set out to push our transformer-based visual navigation system to its operational limit. In the process, we achieved a breakthrough: visual navigation on ultra-low-altitude (<100 ft AGL) flights over desert terrain with almost no identifiable features. Crucially, the entire system ran in real time on a constrained compute node with a narrow-FOV camera.

12 meter error at 75 ft AGL

Here are the key results across dozens of long-duration flights over the Ocotillo desert:

- <30 m of positional error sustained across entire flights

- <5 m of error during multiple sustained segments

- Altitudes as low as 50 ft AGL, at speeds up to 60 mph

- All estimates generated live, without post-processing

Flight 2:

Flight 3:

Why was this impossible?

Low altitude and sparse terrain are inherently brutal for vision-based localization. The closer a system flies to the ground, the fewer distinct features its camera can resolve, the faster the visual field shifts, and the harder it becomes to determine a position relative to prior observations. At 50 feet, this problem is severe. Existing solutions struggle here precisely because the low-altitude visual field lacks the distinct features required to match against maps built from high-altitude or satellite orthophotography.

Flying low over feature-sparse terrain compounds the three hardest challenges of visual navigation simultaneously:

- Reduced Visual Signal: Desert and scrubland lack the visual features that most navigation systems depend on. When the ground is a near-uniform mosaic with nothing to anchor localization, classical methods drift, confuse repeated patterns, and fail.

- Constrained Field of View: Lower altitude shrinks the patch of ground visible in the frame at any moment. This lack of context, coupled with fewer distinct features, makes localization significantly harder. It also makes satellite maps difficult to use, because top-down orthoimagery looks very different from imagery captured by the vehicle at low altitude.

- Extreme Real-Time Compute Demand: High speed (20–60 mph over the ground) combined with a narrow FOV means frames change rapidly, demanding exceptional real-time performance. Poses must be estimated fast enough to be actionable, entirely onboard a sub-$1k edge compute module.

Our system handled all three simultaneously, achieving state-of-the-art performance (benchmarking results to come).
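To put rough numbers on the last two challenges, the sketch below estimates how much ground a downward-facing camera sees and how much consecutive frames overlap. It is a back-of-the-envelope illustration, not a description of the system itself: the nadir-pointing camera, flat terrain, 30° field of view, and 10 fps frame rate are all assumed values chosen for the example, and the function names are hypothetical.

```python
import math

FT_TO_M = 0.3048        # feet to meters
MPH_TO_MPS = 0.44704    # miles per hour to meters per second


def ground_footprint_m(altitude_ft, fov_deg):
    """Ground distance spanned by the camera's field of view.

    Assumes a nadir-pointing (straight-down) camera over flat terrain.
    """
    altitude_m = altitude_ft * FT_TO_M
    return 2.0 * altitude_m * math.tan(math.radians(fov_deg) / 2.0)


def frame_overlap(altitude_ft, speed_mph, fov_deg, fps):
    """Fraction of the footprint shared by two consecutive frames (0 to 1)."""
    footprint_m = ground_footprint_m(altitude_ft, fov_deg)
    shift_m = speed_mph * MPH_TO_MPS / fps  # ground distance moved per frame
    return max(0.0, 1.0 - shift_m / footprint_m)


# Assumed camera parameters (not stated in this post).
ASSUMED_FOV_DEG = 30.0
ASSUMED_FPS = 10.0

for altitude_ft in (50, 400):
    footprint = ground_footprint_m(altitude_ft, ASSUMED_FOV_DEG)
    overlap = frame_overlap(altitude_ft, 60, ASSUMED_FOV_DEG, ASSUMED_FPS)
    print(f"{altitude_ft} ft AGL at 60 mph: "
          f"footprint {footprint:.1f} m, frame overlap {overlap:.0%}")
```

Under these assumed values, consecutive frames at 50 ft and 60 mph share roughly two thirds of their ground coverage, versus about 96% at 400 ft. The footprint scales linearly with altitude, which is why flying low is so punishing for frame-to-frame matching.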

Test Environment & Platform

Working closely with leading OEMs, we developed a trainer platform that matches their typical flight dynamics, sensors, and compute constraints. We paired a fixed rolling-shutter camera with the same compute node many of our OEM partners use in production today, and selected a narrow-FOV camera to ensure compatibility with existing sensor payloads.

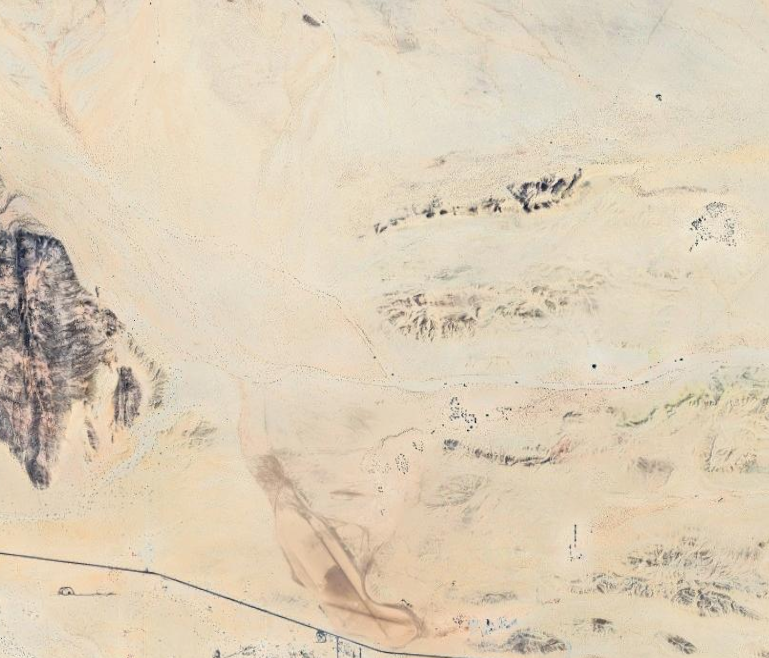

We flew the platform near Anza-Borrego Desert State Park for two weeks, characterizing performance across a variety of flight envelopes. We collected data at altitudes from 50 to 400 ft AGL, at various times of day between sunrise and dusk, and at ground speeds from 20 to 60 mph. Each flight covered 6–10 miles, limited by the range of the platform.

The terrain itself was a deliberate challenge: sparse desert looks nearly identical from frame to frame, a repetition of sand, scrub, and rock with few landmarks to anchor on. Traditional approaches fail because they were designed for environments that cooperate (rich visual texture, strong GPS signal, high-quality sensors). We deliberately chose the environment where those assumptions fall apart.

A new approach to visual navigation

Classical navigation systems rely on hand-crafted feature detectors—algorithms tuned to identify and match specific visual patterns like edges, corners, and textures between frames. While this works in visually rich environments, it falls apart in sparse, low-altitude terrain. The features these systems are tuned to find simply aren't present, or when they are, they are ambiguous (the same scrub brush, the same sandy wash, repeating endlessly). Classical approaches have no way to reason through this ambiguity, causing them to lose track, drift, or fail outright.

Tera's system replaces that brittle foundation with a more capable learned approach. Using foundation models trained on extensive image datasets, together with a specialized custom data pipeline, our system combines drone camera imagery with real-time 3D reconstruction to achieve much higher generality and performance. This combination lets the system determine the drone's 3D pose relative to a known GPS starting point. The result is a system that maintains an accurate position estimate across consecutive frames, even when maps are not readily available.
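As an illustration of what a pose "relative to a known GPS starting point" can mean in practice, the sketch below converts a local east/north displacement in meters into latitude and longitude. It assumes a simple flat-earth approximation around the starting point, which should be adequate over the 6–10 mile flights described above. The function name, starting coordinates, and offsets are hypothetical, and this is not necessarily how the system itself performs the conversion.

```python
import math

EARTH_RADIUS_M = 6_371_000.0  # mean Earth radius, spherical approximation


def offset_to_latlon(start_lat, start_lon, east_m, north_m):
    """Convert a local east/north offset (meters) into latitude/longitude.

    Uses a flat-earth (equirectangular) approximation centered on the
    starting point, so accuracy degrades as the offset grows.
    """
    dlat_deg = math.degrees(north_m / EARTH_RADIUS_M)
    # A degree of longitude shrinks with the cosine of latitude.
    meters_per_radian_lon = EARTH_RADIUS_M * math.cos(math.radians(start_lat))
    dlon_deg = math.degrees(east_m / meters_per_radian_lon)
    return start_lat + dlat_deg, start_lon + dlon_deg


# Hypothetical starting fix and estimated displacement, for illustration only.
start_lat, start_lon = 33.0, -116.0
lat, lon = offset_to_latlon(start_lat, start_lon, east_m=1500.0, north_m=-800.0)
print(f"Estimated position: {lat:.6f}, {lon:.6f}")
```

Keeping the estimate in local meters and converting only at the boundary means the same pose can be reported to any consumer that expects GPS-style coordinates.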

Software-only integration

A navigation system is only as valuable as its ability to slot into real-world operations. Tera's solution integrates directly with our fork of QGroundControl, one of the most widely adopted ground control stations for UAV operations; we plan to make the fork publicly available soon. This makes the system immediately usable by operators without changes to existing workflows or hardware.

Our design philosophy focuses on simplicity: no proprietary ground station, no custom flight controller, just drop-in software intelligence for existing platforms.

Here’s a screen recording of our live tests with our software running on the QGC fork:

The entire pipeline runs in real time on a single NVIDIA Jetson Orin NX mounted onboard, with plans to move to even smaller and more power-efficient chipsets. We deliver metric-accurate pose estimates in flight, with no cloud dependency and no GPS. This means our system works in RF-denied corridors, contested airspace, and remote or sparse terrain where traditional navigation stacks simply go dark.

What’s Next

Navigating in real time in difficult operating envelopes is just the beginning. In upcoming posts, we plan to showcase the next stages of development: generalization across platforms, sensors, and domains, from ground to air. We are separately developing a maritime solution for surface vessels.

We're building the navigation layer for the next generation of autonomous platforms.